Last weekend That Privacy Gal! ordered breakfast from Donut King through Bolt Food. When the order arrived the eclairs were missing. Frustrating, but a routine consumer issue: a paid-for item was not delivered, and under EU consumer law that engages a clear right to redress. She opened a support ticket expecting an apology and a refund. What followed was an episode that, on closer inspection, raises serious questions about how Bolt is using AI in customer-facing decisions, and whether the design of that system is engineered, by intent or by neglect, to circumvent rights that European consumers and data subjects are guaranteed in primary legislation.

The first response

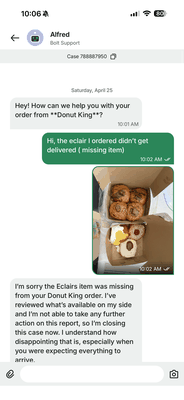

The first reply came from "Alfred", presented with a robot avatar. At least the badging here was honest: the avatar told her she was speaking to a machine. The opening message read:

Hey! How can we help you with your order from **Donut King**?

The literal asterisks around the merchant name are not a typo on her end. They are markdown bold delimiters from Bolt's prompt template, leaking through into the consumer-facing message. It is a small clue to something much bigger. The supposed support agent is a large language model with a templated system prompt, and the templating is so loosely engineered that the formatting tokens reach the user. No human composed that message. No quality assurance caught it before it reached a paying customer.
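To show how this kind of leak typically happens, here is a minimal sketch. It assumes, hypothetically, that the reply is built from a template string containing markdown bold markers and then sent to a chat widget that displays plain text. None of this is Bolt's actual code: the template text, `render_greeting` and `strip_markdown_bold` are invented for illustration.

```python
import re

# Hypothetical greeting template: the ** markers are markdown bold delimiters.
GREETING_TEMPLATE = "Hey! How can we help you with your order from **{merchant}**?"

# Matches **text** (non-greedy) so each bold span can be unwrapped.
BOLD_PATTERN = re.compile(r"\*\*(.+?)\*\*")


def render_greeting(merchant: str) -> str:
    """Fill the template. A plain-text channel will show the asterisks literally."""
    return GREETING_TEMPLATE.format(merchant=merchant)


def strip_markdown_bold(text: str) -> str:
    """Remove **bold** delimiters, keeping the enclosed text, for plain-text channels."""
    return BOLD_PATTERN.sub(r"\1", text)


raw = render_greeting("Donut King")
print(raw)                       # Hey! How can we help you with your order from **Donut King**?
print(strip_markdown_bold(raw))  # Hey! How can we help you with your order from Donut King?
```

The fix is trivial, which is the point: a leak like this suggests nobody checked what the template looks like in the channel the customer actually sees.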

She explained the missing eclairs and supplied a photograph of what had arrived. Alfred replied:

I'm sorry the Eclairs item was missing from your Donut King order. I've reviewed what's available on my side and I'm not able to take any further action on this report, so I'm closing this case now. I understand how disappointing that is, especially when you were expecting everything to arrive.

Read that carefully. In a single message the bot does three things: it acknowledges that the eclairs were missing, thereby confirming the merchant's failure to deliver; it claims to have "reviewed what's available", framing the refusal as the product of an investigation; and it refuses any further action and closes the case.

There is no investigation this chatbot is capable of performing. It has no access to the kitchen, the courier, or any order data beyond what the customer has already given it. The "review" is theatre.

Under the Unfair Commercial Practices Directive 2005/29/EC, Article 6, a commercial practice is misleading where it deceives, or is likely to deceive, the average consumer about a material element of the transaction, and Article 6(1)(g) expressly lists the consumer's rights, including the right to replacement or reimbursement, among the elements the test covers. Pretending an investigation took place, in order to wrap an automated denial in the appearance of due process, is exactly the kind of false impression that provision exists to prohibit.

UCPD is most often invoked against practices that induce a purchase, and a fair objection here is that the purchase has already been made. The text of the Directive does not stop at the cash register. Article 3(1) covers commercial practices "before, during and after a commercial transaction". Article 2(k) defines a "transactional decision" to include any decision by the consumer "to exercise a contractual right in relation to the product, whether the consumer decides to act or to refrain from acting", which is precisely what the consumer is doing when they accept, escalate, or abandon a refund claim.

The Court of Justice has applied UCPD to post-contractual misleading information in Case C-388/13 UPC Magyarország (judgment of 16 April 2015) and to paid services pre-activated on SIM cards without the consumer's prior knowledge in Joined Cases C-54/17 and C-55/17 Wind Tre and Vodafone Italia (judgment of 13 September 2018). The post-sale reading is settled.

The second response, in different clothes

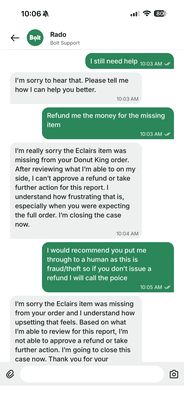

She tried again. The second reply came from "Rado", whose avatar was no longer the robot icon but the green Bolt corporate logo. The name is human-sounding. The visual cue that the consumer was dealing with automation was gone.

She asked for a refund. Rado replied:

I'm really sorry the Eclairs item was missing from your Donut King order. After reviewing what I'm able to on my side, I can't approve a refund or take further action for this report. I understand how frustrating that is, especially when you were expecting the full order. I'm closing the case now.

The text is functionally identical to the first refusal. Same admission that the item was missing. Same theatrical "review". Same automated closure. Only the persona is different. The robot mask has been swapped for the company's corporate logo and a human-sounding name.

This is where the design choice becomes legally interesting.

Article 22 and the appearance of human review

GDPR Article 22 prohibits decisions "based solely on automated processing" where they produce "legal effects" concerning the data subject. A decision that determines whether a consumer receives a remedy conferred by EU law produces legal effects. When a controller refuses a remedy to which the consumer is entitled under the Consumer Rights Directive 2011/83/EU, Article 22 is engaged on its strict "legal effects" prong; this is not a borderline judgement about whether something "similarly significantly affects" the data subject. Refusing the refund is itself a legal effect.

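The standard escape route from Article 22 is human review. A controller that runs an automated system but inserts a human reviewer who can approve, reject, or alter the decision is no longer acting "solely" by automated means. The Article 22 prohibition does not bite, and the matter falls back on ordinary consumer protection rules. That escape route is narrow, however: the Article 29 Working Party's guidelines on automated decision-making, since endorsed by the EDPB, state that a controller cannot avoid Article 22 by fabricating human involvement, and that oversight must be meaningful rather than a token gesture.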

The persona swap deserves to be examined in that context. An AI system labelled as a robot is plainly automated. The same system, presented under a human-sounding name and the company's corporate logo, would lead a reasonable consumer to believe that a human reviewer is now involved. If, as the language of the message strongly suggests, the second interaction is the same automated system in different clothes, the company has constructed an appearance of human involvement that is not actually present. That appearance is precisely what would let Bolt claim Article 22 does not apply, while in practice still issuing fully automated refusals.

I am not in a position to say whether this design choice is deliberate. The effect is the same either way. The customer is led to believe a human reviewer denied their refund, when nothing in the system indicates any such review took place. EU law is concerned with effects, not motives.

The fair question that Bolt's lawyers and DPO ought to be answering is this one. Is the persona swap an attempt to circumvent Article 22 by dressing automated decision-making as human review? If so, it is unlawful. If not, the design must be changed so that it cannot be mistaken for one.

AI Act transparency

The AI Act, in Article 50, requires providers of AI systems intended to interact directly with natural persons to ensure that those persons are informed they are interacting with an AI system, unless this is obvious to a reasonably well-informed, observant and circumspect person given the circumstances and context of use. These transparency obligations apply from 2 August 2026. In the first interaction, the robot avatar arguably discharged that obligation. In the second, with the corporate logo and the human name, no reasonable consumer would conclude that the AI nature of the system was obvious. If "Rado" is in fact an AI system, that presentation falls short of what Article 50 requires.

If "Rado" is in fact a human, then a human is operationally indistinguishable from the chatbot in the language they produce, which is its own commentary on what is happening here.

The underlying Right

It is worth being clear about the underlying Right, because the Article 22 and AI Act analysis is built on top of it. Under the Consumer Rights Directive 2011/83/EU, and beneath that the more general principles of EU consumer protection, a trader who fails to deliver goods or services that the consumer has paid for must restore the position. Ordinarily that means delivering the missing item or refunding the consumer. There is no version of EU consumer law in which a trader is entitled to acknowledge non-delivery and refuse the remedy. Bolt's automated system did precisely that. Twice. Under two different personas.

What Bolt should do

Her case was eventually resolved, apparently by a human at Bolt, although we can neither confirm nor deny this because we were never told who, or what, handled it. That outcome does not undo the systemic concern. Most consumers will not push past two automated closures. Most will not know the names of the directives that were engaged. Most will write off the cost of the missing item and never order again. That is the design's actual effect: deterring lawful consumer claims by wrapping the refusal in the appearance of process.

Bolt operates across the EU, in jurisdictions where the AI Act, GDPR, the Unfair Commercial Practices Directive, the Consumer Rights Directive and the Digital Services Act all apply concurrently. Designing a customer-facing system that engages all five at once is an achievement of a kind. The remedy is not complicated.

When the bot acknowledges that an item was not delivered, it should issue the refund. When it cannot resolve the issue, it should escalate to a human reviewer whose role and identity are clearly disclosed. And when the system swaps personas, the avatar and the name should not be permitted to imply human involvement that is not there.
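Those three rules are simple enough to state as code. The sketch below is purely illustrative and assumes a hypothetical ticket model: `Ticket`, `Persona`, `route` and `disclosure` are invented names, not anything taken from Bolt's systems. Its point is structural: there is no branch in which an acknowledged non-delivery ends in a refusal, and the disclosure depends on whether the persona is an AI, not on its name or avatar.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    REFUND = "refund"
    HANDLE_AUTOMATICALLY = "handle_automatically"
    ESCALATE_TO_HUMAN = "escalate_to_human"
    # Deliberately no "close with refusal" option for an admitted non-delivery.


@dataclass
class Ticket:
    # Hypothetical fields, for illustration only.
    non_delivery_acknowledged: bool  # the system accepts a paid-for item did not arrive
    bot_can_resolve: bool            # the system can settle the issue without a human


@dataclass
class Persona:
    # Hypothetical persona record: only the AI flag drives the disclosure text.
    name: str
    is_ai: bool


def route(ticket: Ticket) -> Action:
    """Refund admitted non-delivery; otherwise resolve automatically or escalate to a human."""
    if ticket.non_delivery_acknowledged:
        return Action.REFUND
    if ticket.bot_can_resolve:
        return Action.HANDLE_AUTOMATICALLY
    return Action.ESCALATE_TO_HUMAN


def disclosure(persona: Persona) -> str:
    """Statement shown with every message, whatever name or avatar the persona uses."""
    if persona.is_ai:
        return f"{persona.name} is an automated assistant, not a human."
    return f"{persona.name} is a human member of the support team."


print(route(Ticket(non_delivery_acknowledged=True, bot_can_resolve=False)))  # Action.REFUND
print(disclosure(Persona(name="Rado", is_ai=True)))  # Rado is an automated assistant, not a human.
```

Because the disclosure is tied to `is_ai`, swapping a persona's name or avatar cannot change what the customer is told about who is answering.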

Until those changes are made, every closure issued by this system in response to an admitted non-delivery is, in my professional opinion, exposed to challenge under at least four EU regimes.

As usual, I expect some will say this is a "nothing burger". But is it? I wrote this piece because this has now happened to us at least three times in the last 12 months, and each time we had to threaten legal action before the AI agent would escalate to a human. I am more persistent than most and I understand my Rights; many would give up at the first refusal. Across a market of 500 million people, that quickly adds up to a lot of money flowing to Bolt out of its customers' pockets. That's a very expensive "Nothing Burger".

I hope they don't forget to add the bacon and cheese and charge me for it anyway...